Virtualisation can help optimise resource sharing, providing improved support for low-latency applications when compared to bare-metal systems. But achieving ultra-low latency on cost-effective hardware requires strategic planning.

The aim of this article is to address challenges in network packet processing and emphasise the need for high reliability and low latency in general-purpose networks. Virtualisation, through containers and virtual machines, is a potential solution to optimise resource sharing. We can show that virtualisation effectively supports low-latency applications with minimal overhead and improved tail latency compared to bare-metal systems. However, achieving ultra-low latency on cost-effective hardware requires strategic planning.

URLLC in general-purpose networks

General-purpose networks, such as most Internet Service Provider (ISP) networks or almost all networks connected to the Internet, are based on a best-effort principle. Under this principle, the network tries to deliver every packet as fast as possible, but makes no attempt to reduce the impact that packets have on each other.

However, low-latency packet processing applications are widespread in industrial and automotive scenarios, which require both reliable packet delivery and low latency. The 5G Ultra-Reliable Low-Latency Communications (URLLC) profile requires an end-to-end latency below 1 ms, and the 99.999th percentile of traffic must stay within this limit.1
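
To make the requirement concrete, the following minimal sketch checks a set of measured end-to-end latencies against the two numbers quoted above. The function and constant names are our own for illustration, and the sketch assumes latencies are given in microseconds and that "99.999% of traffic" means at most one late packet per 100,000.

```python
from typing import Iterable

URLLC_LIMIT_US = 1000.0      # 1 ms end-to-end limit, expressed in microseconds
URLLC_PER_PACKETS = 100_000  # 99.999 % => at most 1 late packet per 100,000


def count_late(latencies_us: Iterable[float], limit_us: float = URLLC_LIMIT_US) -> int:
    """Count packets that do not stay strictly below the latency limit."""
    return sum(1 for value in latencies_us if value >= limit_us)


def meets_urllc(latencies_us: Iterable[float], limit_us: float = URLLC_LIMIT_US) -> bool:
    """Return True if at least 99.999 % of the samples are below the limit."""
    samples = list(latencies_us)
    if not samples:
        raise ValueError("need at least one latency sample")
    late = count_late(samples, limit_us)
    # Integer comparison avoids floating-point rounding at the fifth nine.
    return late * URLLC_PER_PACKETS <= len(samples)
```

Note that a single late packet already fails the check for any measurement shorter than 100,000 packets, so evaluating this percentile needs long measurement runs.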

In prior work, we analysed the use of commodity hardware for packet processing in systems that follow the URLLC requirements. Gallenmüller et al.2 compared packet processing in virtual machines with packet processing on bare metal without virtualisation. They found that, in both cases, a single machine without tuning parameters and other optimisations is already enough to violate the URLLC requirements. As such, to leave room for the further processing steps usually found in general-purpose networks, such as switches, middleboxes, and routers, the per-node processing time for packets that must meet URLLC needs to be reduced and stabilised.
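
As a rough illustration of why per-node processing matters, the sketch below splits the end-to-end limit evenly across the nodes on a path. The function name, the even split, and the numbers in the usage note are assumptions for illustration only; they are not taken from the cited measurements.

```python
def per_node_budget_us(end_to_end_limit_us: float,
                       fixed_delay_us: float,
                       nodes: int) -> float:
    """Evenly split the latency left after fixed delays across all nodes.

    fixed_delay_us stands for delay that no node can influence,
    for example propagation delay on the links (hypothetical input).
    """
    if nodes <= 0:
        raise ValueError("a path needs at least one node")
    remaining = end_to_end_limit_us - fixed_delay_us
    if remaining < 0:
        raise ValueError("fixed delay alone already exceeds the limit")
    return remaining / nodes
```

For example, with a hypothetical 200 µs of fixed delay and eight nodes, `per_node_budget_us(1000.0, 200.0, 8)` leaves 100 µs per node. An even split is a simplification, but it shows how quickly the budget shrinks with every additional processing step.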

Optimisation for commodity hardware using Linux

Wiedner et al.3 outline that the potential optimisations differ depending on the type of virtualisation and on whether user-space networking, such as DPDK, or kernel-space networking is used. Furthermore, reducing packet processing latency increases energy consumption, because a significant optimisation for all variants is to turn off the CPU's energy-saving mechanisms and idle states. This ensures that the system is always ready to process packets without the delay of waking up.
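
One way to keep CPUs out of deep idle states at run time on Linux is the PM QoS interface `/dev/cpu_dma_latency`: a process writes a 32-bit latency target in microseconds and the request stays active for as long as the file is held open. The sketch below illustrates that interface; it is not necessarily the method used in the cited work, the helper names are our own, and writing to the device usually requires root privileges.

```python
import os
import struct
from contextlib import contextmanager


def encode_latency(max_latency_us: int) -> bytes:
    """Encode the latency target as the signed 32-bit value the kernel expects."""
    return struct.pack("=i", max_latency_us)


@contextmanager
def hold_cpu_latency(max_latency_us: int = 0, path: str = "/dev/cpu_dma_latency"):
    """Request a maximum CPU wake-up latency for the duration of the block.

    The request is dropped as soon as the file descriptor is closed,
    so the latency-critical work must run inside the with-block.
    """
    fd = os.open(path, os.O_WRONLY)
    try:
        os.write(fd, encode_latency(max_latency_us))
        yield
    finally:
        os.close(fd)
```

A packet-processing loop would then run inside `with hold_cpu_latency(0): ...`. A target of 0 µs keeps the cores in the shallowest idle state, which is exactly the energy trade-off described above.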

The most influential factor for latency in both containers (lightweight virtualisation) and virtual machines (full OS virtualisation) is interrupts, which stop the currently executing process and must be handled immediately. Moving interrupts to cores not involved in low-latency packet processing significantly improves performance.
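
On Linux, device interrupts can be steered through the files `/proc/irq/<number>/smp_affinity_list`, which accept a CPU list. The following sketch, with helper names of our own choosing, computes the "housekeeping" cores that are not used for packet processing and tries to move every interrupt onto them. It assumes root privileges and is only one possible way to apply the optimisation; inter-processor interrupts such as rescheduling interrupts are not covered by this interface.

```python
from pathlib import Path
from typing import Iterable, List


def housekeeping_cpus(all_cpus: Iterable[int], packet_cpus: Iterable[int]) -> List[int]:
    """Return the cores that are NOT reserved for packet processing."""
    return sorted(set(all_cpus) - set(packet_cpus))


def cpu_list(cpus: Iterable[int]) -> str:
    """Format cores as a comma-separated CPU list, e.g. '0,1,4'."""
    return ",".join(str(cpu) for cpu in sorted(cpus))


def move_irqs(housekeeping: Iterable[int], proc_irq: Path = Path("/proc/irq")) -> List[str]:
    """Try to steer every IRQ to the housekeeping cores; return the IRQs moved."""
    target_list = cpu_list(housekeeping)
    moved = []
    for entry in sorted(proc_irq.iterdir()):
        affinity_file = entry / "smp_affinity_list"
        if not affinity_file.is_file():
            continue  # skip non-IRQ entries such as default_smp_affinity
        try:
            affinity_file.write_text(target_list)
            moved.append(entry.name)
        except OSError:
            # Some interrupts reject affinity changes; leave them untouched.
            continue
    return moved
```

For an eight-core machine with cores 2 and 3 reserved for packet processing, `move_irqs(housekeeping_cpus(range(8), [2, 3]))` would request that all movable interrupts run on the remaining six cores.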

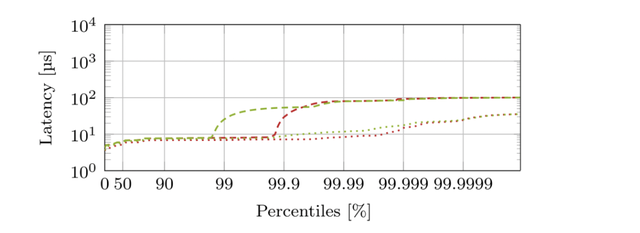

Figure 1, based on the data published by Wiedner et al.3, compares the end-to-end latency of optimised containers and virtual machines when processing packets on systems from different vendors. It illustrates that, after carefully optimising the system, we can achieve latencies with a large margin to the URLLC requirements, leaving room for further processing in the network. Moreover, it shows that the remaining latency outside of queuing can be attributed to the hardware design and to TLB shootdowns, which cannot be disabled. Further causes, especially of high outliers, can be attributed to rescheduling interrupts.

Figure 1 shows an analysis based on DPDK as user-space networking. Kernel-space networking reduces the degree of isolation that can be achieved through, for example, pinning and isolating the cores dedicated to packet processing. Our analysis in different papers (see notes 2-5 below) shows that it is generally possible to use virtualised network functions for URLLC flows, but careful planning and optimisation are required.
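
Pinning itself can be sketched with the Linux scheduler affinity calls exposed by Python's `os` module. The helper name below is our own, and the sketch only restricts where one process may run. Keeping other tasks away from that core needs additional isolation, for example the `isolcpus` kernel boot parameter, which is not shown here.

```python
import os
from typing import Set


def pin_to_cpu(cpu: int, pid: int = 0) -> Set[int]:
    """Pin a process (default: the calling one) to a single core.

    Returns the resulting affinity set so the caller can verify it.
    Uses Linux-only scheduler calls.
    """
    allowed = os.sched_getaffinity(pid)
    if cpu not in allowed:
        raise ValueError(f"core {cpu} is not available to this process")
    os.sched_setaffinity(pid, {cpu})
    return set(os.sched_getaffinity(pid))
```

A packet-processing worker would call `pin_to_cpu` once at start-up with the core that was reserved for it.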

Recommendations

Based on the results presented in prior work, using virtualisation for ultra-low latency is generally possible. However, the influence of other parts of the system, such as the kernel, interrupts, and actions caused by interrupts, must be carefully reduced. As such, we must balance ultra-low latency against reducing the resources needed for packet processing.

Generally, we recommend analysing the exact needs of the processed flows, optimising only as much as needed, and using lightweight virtualisation for as long as possible when the requirements are as strict as URLLC, especially when sharing the device. When sharing the device, stronger isolation reduces the influence that the different applications have on each other, especially with regard to networking.

Figure 2 presents the recommendations as a table that forms a decision tree based on latency, security, resources, and the cache model available in the hardware.

Conclusion

To summarise our findings, we analysed the potential of processing URLLC traffic in general-purpose networks on commodity hardware to reduce the need for specialised hardware. By reducing interrupts on packet-processing cores, turning off energy-saving mechanisms, and consistently isolating those tasks from the rest of the system, we can provide the ultra-low latency packet processing needed to meet URLLC requirements end-to-end across different systems and ISP networks. Both containers and virtual machines can be used for this.

Outlook

Utilising virtualisation in industrial scenarios and moving those applications to the cloud requires rethinking current networks and their design. Further analysis is needed of the optimisations and potential of using commodity hardware in combination with specialised hardware.

Furthermore, we focus on analysing and improving our insights into low-latency packet processing on different hardware, to provide recommendations for reusing existing hardware in future networks, including virtualised, shared network components. An important aspect is to analyse the different available solutions in a structured and precise manner to give network operators and management valuable insights.

Notes

1. 5G: Study on Scenarios and Requirements for Next Generation Access Technologies, Last accessed: May 22, 2023 (2017).

2. S. Gallenmüller, J. Naab, I. Adam, G. Carle, 5G QoS: Impact of Security Functions on Latency, in: NOMS 2020 - IEEE/IFIP Network Operations and Management Symposium, Budapest, Hungary, April 20-24, 2020, IEEE, 2020, pp. 1–9.

3. Florian Wiedner, Max Helm, Alexander Daichendt, Jonas Andre, Georg Carle, “Performance evaluation of containers for low-latency packet processing in virtualized network environments,” Performance Evaluation, vol. 166C, no. 102442, Aug. 2024.

4. Sebastian Gallenmüller, Florian Wiedner, Johannes Naab, Georg Carle, “How Low Can You Go? A Limbo Dance for Low-Latency Network Functions,” Journal of Network and Systems Management, vol. 31, no. 20, Dec. 2022.

5. Florian Wiedner, Alexander Daichendt, Jonas Andre, Georg Carle, “Control Groups Added Latency in NFVs: An Update Needed?,” in 2023 IEEE Conference on Network Function Virtualization and Software Defined Networks (NFV-SDN), Nov. 2023.
